Tag: clinical AI

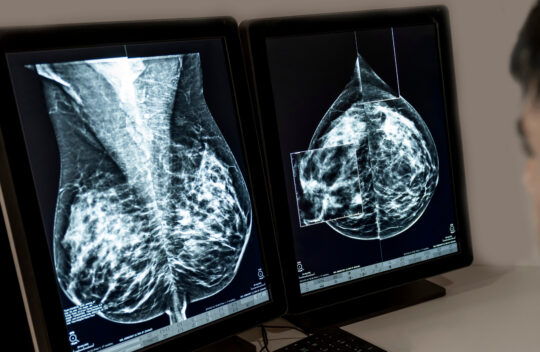

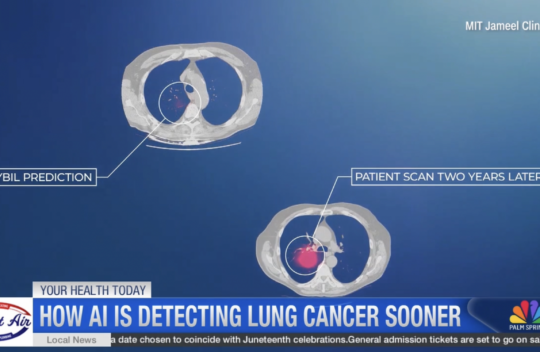

The Future of Early Detection: New MIT AI Tool Predicts Lung Cancer Risk Years Before Tumors Appear

Lung cancer remains the leading cause of cancer-related deaths in the United States for both men and women. While early detection is critical for survival, a significant gap exists in current screening methods. A new artificial intelligence tool is now offering a window into the future, helping clinicians identify high-risk patients years before a tumor is visible on a scan.

The Current Screening Gap

Standard lung cancer screening currently involves a low-dose CT scan. However, data shows that only about 20 percent of eligible individuals actually undergo these checks. Furthermore, historical guidelines have been restrictive. Until recently, CT scans were typically warranted only for adults aged 50 to 80 with a heavy smoking history. These narrow criteria have meant that half of all people diagnosed with lung cancer in the United States every year would not have met the standard requirements for screening.

A New Tool for Prediction

Doctors at the Mass General Brigham Cancer Institute, in collaboration with engineers at MIT, have developed an AI tool called Sybil to address this disparity. Sybil is designed to analyze a single CT scan and generate a personalized risk score. This score predicts the likelihood of a person developing lung cancer over any period up to six years. Validation studies reported that Sybil is between 86 and 94 percent accurate in distinguishing between high-risk and low-risk patients within a one-year window.

The technology achieves this through advanced pattern recognition. By training on tens of thousands of previous scans, the AI identifies biological signals and imaging patterns that are invisible to the human eye.

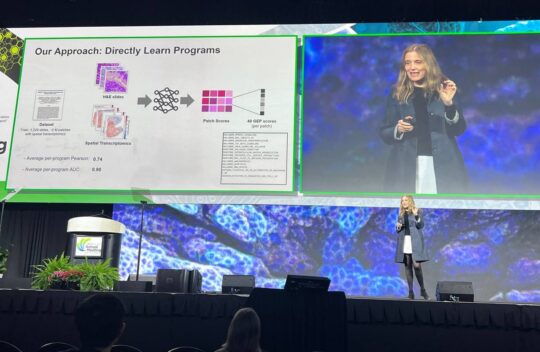

On algorithms, life, and learning

From enhancing international business logistics to freeing up more hospital beds to helping farmers, MIT Professor Dimitris Bertsimas SM ’87, PhD ’88 summarized how his work in operations research has helped drive real-world improvements, while delivering the 54th annual James R. Killian Faculty Achievement Award Lecture at MIT on Thursday, March 19.

Bertsimas also described how artificial intelligence is now being used in some of his scholarly projects and as a tool in MIT Open Learning efforts, which he currently directs, another facet of a highly productive and lauded career over four decades at the Institute. The Killian Award is the highest prize MIT gives its faculty.

“I have tried to improve the human condition,” Bertsimas said, summarizing the breadth of his work and the many applications to everyday living that he has found for it.

A better method for identifying overconfident large language models

Large language models (LLMs) can generate credible but inaccurate responses, so researchers have developed uncertainty quantification methods to check the reliability of predictions. One popular method involves submitting the same prompt multiple times to see if the model generates the same answer.

But this method measures self-confidence, and even the most impressive LLM might be confidently wrong. Overconfidence can mislead users about the accuracy of a prediction, which might result in devastating consequences in high-stakes settings like health care or finance.
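As a rough illustration of the repeated-prompt check described above (not the new MIT method, which is not detailed here), the sketch below samples the same prompt several times and reports how often the most common answer appears. The names `self_consistency` and `make_stub` are hypothetical, and `generate` stands in for whatever function calls a real model.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Iterable, Tuple


def self_consistency(
    generate: Callable[[str], str], prompt: str, n_samples: int = 10
) -> Tuple[str, float]:
    """Sample the same prompt n_samples times.

    Returns the most frequent (normalized) answer and the fraction of
    samples that agree with it. `generate` is a hypothetical stand-in
    for a call to an actual LLM.
    """
    answers = [generate(prompt).strip().lower() for _ in range(n_samples)]
    top_answer, top_count = Counter(answers).most_common(1)[0]
    return top_answer, top_count / n_samples


def make_stub(responses: Iterable[str]) -> Callable[[str], str]:
    """Build a fake model that cycles through canned responses (illustration only)."""
    canned = cycle(list(responses))
    return lambda prompt: next(canned)


# A model that wavers between answers gets a lower agreement score.
wavering = make_stub(["Paris", "Paris", "Lyon", "Paris"])
answer, agreement = self_consistency(wavering, "Capital of France?", n_samples=8)

# A model that always repeats the same wrong answer still scores 1.0.
stubborn = make_stub(["Lyon"])
wrong_answer, wrong_agreement = self_consistency(stubborn, "Capital of France?", n_samples=8)
```

The second stub shows the weakness the article points to: perfect agreement only says the model is consistent with itself, not that the answer is correct.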

To address this shortcoming, MIT researchers introduced a new method for measuring a different type of uncertainty that more reliably identifies confident but incorrect LLM responses.