Tag: clinical AI

One Survivor’s AI Breakthrough Predicts Cancer Years Ahead

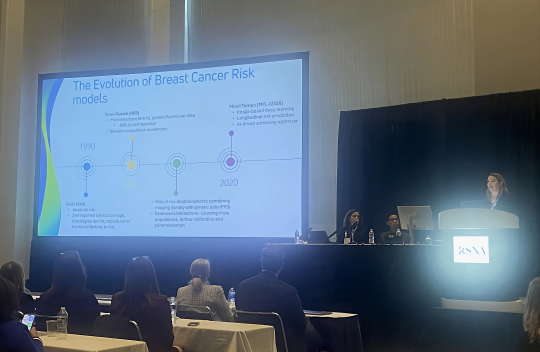

AI Decoded focusses on one of the most urgent, tangible uses of artificial intelligence: health care. We speak to Dr Regina Barzilay, an MIT professor who is building machine-learning models to predict disease. She was diagnosed with breast cancer in 2014 and has used that experience and knowledge to direct her research towards prevention. The AI model she and her team built, named MIRAI, can now predict a patient’s risk of developing breast cancer within five years. Are we on the brink of a revolution in treating cancer for everyone? Find out on AI Decoded. Joining presenter Christian Fraser are AI Decoded co-host Stephanie Hare and the BBC's AI correspondent Marc Cieslak.

Marketwatch25: She survived breast cancer. Now her AI tool could help you skip annual mammograms.

As an MIT computer-science professor, Regina Barzilay was used to living on the bleeding edge of innovation, teaching computers to understand words in the nascent field of natural language processing. But when she was diagnosed with breast cancer in 2014, she was thrust into a different and, as she describes it, “really backwards” technological world.

AI steps in to detect the world’s deadliest infectious disease

As a professor and computer scientist at MIT, Regina Barzilay has spent years building AI models to detect breast cancer and lung cancer. Then, when a hospital in Sri Lanka told her it couldn't afford to buy off-the-shelf AI models for TB screenings, she agreed to build one for them. As she got to work this past year, she says, she immediately understood why TB is at the vanguard of the global health challenges with AI solutions.

"You can see TB. TB is visual. You have an x-ray. You have a label which says whether they have it or not, and you just train the model," Barzilay says, adding that it only took her a few months and less than $50,000 to make her model. "It's straightforward, very cheap, very fast to develop."